Deep learning systems evolved significantly in 2020, with trends shifting towards Bayesian neural networks, reinforcement learning, transfer learning, advanced computer architecture, and chip design, alongside a steady series of developments in speech recognition and natural language processing. Reinforcement learning continues to drive a loop of innovation in dynamic landscapes, including embedded systems and FPGA and GPU accelerators, and is taking over from extreme learning methods. In natural language processing, GPT-3 is expected to give way to GPT-4 in 2021, expanding across machine translation, text and voice processing, information extraction, and text mining.

The COVID-19 outbreak in 2020 crippled blockchain projects that integrate machine learning and data analytics. Explainable AI (xAI) has a significant future in helping enterprises meet legal and audit requirements and comply with government regulations. As COVID-19 vaccines start reaching cities this month, more projects that combine blockchain and cryptocurrency technologies with artificial intelligence should follow. Blockchain has become the new normal for resilient supply chains that connect producers and consumers. IBM and Maersk partnered to create blockchain tools for digital supply chain networks in 2020, aiming to reach the critical mass that blockchain-based supply chain networks rely on.

Big data

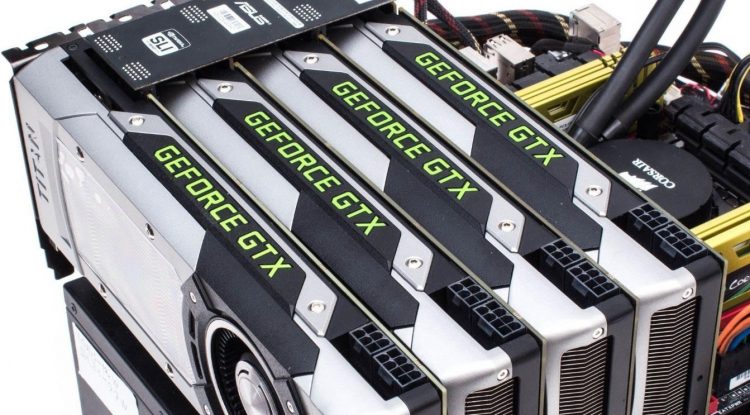

According to the ResearchAndMarkets report ‘Big Data – Global Market Trajectory & Analytics,’ the big data market was estimated at US$70.5 billion in 2020 amid the COVID-19 crisis. The market is projected to reach US$243 billion by 2027, buoyed by data from 1010data Inc, Accenture, Actian, Cazena, Cloudera Inc, Datameer, Dell EMC, Fujitsu, Google, Guavus, Hewlett Packard, Hortonworks Inc, IBM, MapR, Microsoft, Oracle, Palantir Technologies, Ryft Systems, SAP, Splunk, and Teradata Corporation. GeForce GPUs crushed the market in 2020, reaching 80% of the discrete PC GPU segment. Nvidia's stock rose from $174 in October 2019 to an all-time high of $589 in November 2020, a return of about 238% during COVID-19.

Linux

Automotive Grade Linux, a Linux Foundation project, has released the 10th version of its code base for automakers, dubbed “Jumping Jellyfish.” While much of the world continues to run machine learning applications on Windows or macOS, a vast number of enterprises run Linux servers on the web, and Linux is available as open-source code that anyone can modify as they require. Linux comes in many distributions. Android phones run versions of Linux, and so do Chromebooks. There are many versions of Ubuntu Linux. Ubuntu Christian Edition is a free ecosystem geared towards conservative Christians. It is available for both 32-bit and 64-bit PCs.

Christian Edition provides a full set of desktop applications, from word processing and spreadsheet applications to web server applications and the software programming tools used for machine learning. DansGuardian provides award-winning parental controls for web content, which sets Ubuntu Christian Edition apart from other Linux distros. According to Orbis Research Group and Fortune Business Insights, the Linux operating system has been a cornerstone that catalyzes machine learning platforms, and the Linux operating system market is expected to reach $15 billion by 2027. Tesla uses its own version of Linux as an embedded system, while many other automakers work with the Linux Foundation on Automotive Grade Linux for the connected car to handle the deluge of big data, smart IoT systems, telematics, and autonomous driving systems.

Image courtesy: Andreas Lundqvist Licensed under GFDL 1.3

Machine learning

xAI

The black-box architecture of many algorithms makes it hard for financial companies to judge an AI's fairness when it computes credit scores, and hard for clinicians to interpret early warning scores for acute illnesses. Building neural networks for machine learning models creates complex problems for the interpretability of the model.

Several machine learning SDKs, such as the SHAP (SHapley Additive exPlanations) explainers available through Microsoft Azure Machine Learning, aim to improve interpretability for data scientists so that enterprises comply with the best practices and principles of healthcare, life sciences, and retail, and with government regulations. Stakeholders who consume AI decisions require transparency to maintain trust in the reliability of machine learning algorithms. Interpretability makes it easier to understand a machine learning model's behaviour when debugging it and when validating legal and audit compliance. Enterprises can install the Azure interpretability SDK to explain a model globally, in its entirety, or locally, for each data point. Azure provides SHAP explainers alongside its other interpretability techniques. The SHAP tree explainer targets decision trees and ensembles of trees. The SHAP kernel explainer is model-agnostic and uses a weighted local linear regression to estimate SHAP values for any machine learning model.

Additive feature attribution method

The additive feature attribution method uses a simpler explanation model to interpret the original model. There are around six explanation methods; we will look primarily at three of them. Let $f$ be the original prediction model and $k$ the explanation model. Local methods such as LIME explain a single prediction $f(a)$. They work on a simplified input $a'$ that maps to the original input through a mapping function $a = h_a(a')$, and they try to ensure that $k(c') \approx f(h_a(c'))$ whenever $c' \approx a'$.

A data scientist can define the explanation model as a linear function of binary variables in the additive feature attribution method:

$$k(c') = \theta_0 + \sum_{i=1}^{M} \theta_i c'_i$$

where $c' \in \{0,1\}^M$, $M$ is the number of simplified input features, and $\theta_i \in \mathbb{R}$.
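As a rough illustration of an additive attribution, the sketch below computes exact Shapley values (the quantity SHAP estimates) for a tiny model by enumerating every feature coalition. The `toy_model` function, the all-zero baseline, and the helper name `shapley_values` are assumptions made for this example, not part of any SDK, and the brute-force enumeration is only practical for a handful of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(f, a, baseline):
    """Return (theta_0, [theta_1..theta_M]) for model f at input a."""
    m = len(a)

    def value(present):
        # Features in `present` keep their real value (binary variable = 1);
        # all others fall back to the baseline (binary variable = 0).
        return f([a[i] if i in present else baseline[i] for i in range(m)])

    theta = [0.0] * m
    for i in range(m):
        others = [j for j in range(m) if j != i]
        for size in range(m):
            # Shapley weight shared by all coalitions of this size.
            weight = factorial(size) * factorial(m - size - 1) / factorial(m)
            for subset in combinations(others, size):
                present = set(subset)
                theta[i] += weight * (value(present | {i}) - value(present))
    return value(set()), theta


def toy_model(x):
    # Hypothetical model with an interaction between features 1 and 2.
    return 2.0 * x[0] + x[1] * x[2] + 1.0


a = [1.0, 2.0, 3.0]
theta_0, theta = shapley_values(toy_model, a, baseline=[0.0, 0.0, 0.0])
print(theta_0, theta)
# With every binary variable set to 1, the linear explanation
# model should reproduce the original prediction f(a).
print(theta_0 + sum(theta), toy_model(a))
```

The two numbers on the last line should agree: the intercept plus the per-feature weights add up to the model's prediction, which is what makes the attribution additive.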

LIME

LIME interprets individual predictions by approximating the model locally around each prediction. It adheres to the additive feature attribution equation, treating the simplified inputs $a'$ as interpretable inputs and mapping them to the original input through $a = h_a(a')$. This function converts a binary vector of interpretable inputs into the original input space. In natural language processing with bag-of-words text features, $h_a$ converts a vector of 1s and 0s (word present or absent) into the word counts of the original input. To find $\theta$, we can minimize the LIME objective function as follows:

$$\xi = \underset{k \in \mathcal{K}}{\arg\min}\; L(f, k, \pi_{a'}) + \Omega(k)$$

Here $L$ is the loss between the explanation model and the original model over a set of samples in the simplified input space, weighted by the local kernel $\pi_{a'}$, and $\Omega$ penalizes the complexity of $k$.
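The LIME procedure can be sketched in a few lines of plain Python: sample binary vectors, map them back to the input space, weight each sample by its closeness to the instance, and fit a weighted linear model. This is a simplified sketch, not the `lime` package: the normalized Hamming distance, the exponential kernel width, the zero "feature absent" value, the omission of the complexity penalty, and the names `lime_explain`, `h`, and `solve` are all assumptions for illustration.

```python
import math
import random


def h(a, c):
    """Map a binary vector back to input space: 1 keeps a_i, 0 zeroes it."""
    return [ai if ci else 0.0 for ai, ci in zip(a, c)]


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= factor * M[col][k]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(M[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (M[r][n] - tail) / M[r][r]
    return x


def lime_explain(f, a, n_samples=200, kernel_width=0.75, seed=0):
    """Fit a locally weighted linear explanation; returns (theta_0, thetas)."""
    rng = random.Random(seed)
    m = len(a)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        c = [rng.randint(0, 1) for _ in range(m)]
        # Fraction of features switched off = distance from the instance.
        dist = (m - sum(c)) / m
        weights.append(math.exp(-(dist ** 2) / kernel_width ** 2))
        rows.append([1.0] + [float(ci) for ci in c])  # leading 1 = intercept
        targets.append(f(h(a, c)))
    d = m + 1
    # Weighted normal equations: (X^T W X) theta = X^T W y.
    A = [[sum(w * r[i] * r[j] for w, r in zip(weights, rows))
          for j in range(d)] for i in range(d)]
    b = [sum(w * r[i] * y for w, r, y in zip(weights, rows, targets))
         for i in range(d)]
    theta = solve(A, b)
    return theta[0], theta[1:]


def linear_model(x):
    # Hypothetical black-box model; linear so the fit can be checked by hand.
    return 3.0 * x[0] - 2.0 * x[1] + 0.5 * x[2] + 4.0


theta_0, theta = lime_explain(linear_model, [1.0, 2.0, 4.0])
print(theta_0, theta)
```

For a model that is already linear, the fitted weights should match each coefficient multiplied by the corresponding feature value, which makes this a convenient sanity check before trying a non-linear model.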

DeepLIFT

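DeepLIFT is an explanation method designed for deep neural network models. It attributes to each input $a_i$ a value $C_{\Delta a_i \Delta o}$ that represents the effect of setting that input to a reference value instead of its original value.

A data scientist can represent the summation equation as:

$$\sum_{i=1}^{n} C_{\Delta a_i \Delta o} = \Delta o$$

where $o = f(a)$ is the model output, $\Delta o = f(a) - f(r)$, $\Delta a_i = a_i - r_i$, and $r$ is the reference input.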
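The summation property that DeepLIFT satisfies can be checked on a single ReLU neuron. The sketch below is a simplification that assumes one linear layer followed by a ReLU and applies a rescale-style multiplier (the change in output divided by the change in pre-activation). The weights, the all-zero reference input, and the function name `deeplift_single_neuron` are hypothetical, and returning zero contributions when the pre-activation does not change is a shortcut chosen for this example.

```python
def relu(z):
    return max(0.0, z)


def deeplift_single_neuron(weights, bias, a, r):
    """Contributions of each input to the change in a ReLU neuron's output.

    a is the actual input and r is the reference input.
    Returns (contributions, delta_o).
    """
    z_a = sum(w * x for w, x in zip(weights, a)) + bias
    z_r = sum(w * x for w, x in zip(weights, r)) + bias
    delta_z = z_a - z_r
    delta_o = relu(z_a) - relu(z_r)
    # Rescale-style multiplier for the non-linearity.
    multiplier = delta_o / delta_z if abs(delta_z) > 1e-12 else 0.0
    # The linear layer contributes w_i * (a_i - r_i) to delta_z;
    # the multiplier carries that share through the ReLU.
    contributions = [w * (x - xr) * multiplier
                     for w, x, xr in zip(weights, a, r)]
    return contributions, delta_o


contributions, delta_o = deeplift_single_neuron(
    weights=[1.0, -2.0, 0.5], bias=-1.0,
    a=[3.0, 1.0, 2.0], r=[0.0, 0.0, 0.0],
)
print(contributions, sum(contributions), delta_o)
```

The sum of the per-input contributions should equal the difference between the output at the actual input and the output at the reference input.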

Microsoft Azure xAI interpretability techniques.

MLOps

The core fundamentals of MLOps are derived from the DevOps lifecycle. In a traditional DevOps lifecycle, executable source code is built in development or sandbox environments and goes through the build-deploy-monitor lifecycle for software engineering applications. Implementing a continuous CI/CD/CT pipeline for software applications differs from implementing the MLOps lifecycle. ML systems are stochastic and non-deterministic, so their behaviour depends heavily on the datasets ML practitioners use and can vary over time.

The constant change in the machine learning lifecycle has raised the need for MLOps to redeploy machine learning models. Most enterprises that implement MLOps adopt the strategy to varying degrees. Google's MLOps guidelines describe three levels of MLOps that enterprises can implement.

MLOps Level 0: At this level, machine learning models are trained and deployed manually, through scripts and interactive techniques. Because the process is manual, Level 0 has no CI/CD pipeline.

MLOps Level 1: At this level, there is some automation: models go through continuous training, including the data pipelines and validation processes. The machine learning models are retrained on the latest available datasets whenever performance degrades.

MLOps Level 2: This pipeline looks similar to the DevOps lifecycle, with automated CI/CD pipelines. Data scientists can update the machine learning models without manual work from machine learning and deep learning engineers.
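To make the Level 1 idea of continuous training more concrete, here is a minimal sketch of a retraining trigger with a validation gate. It is not taken from Google's guidelines: the `ModelVersion` record, the `continuous_training_step` function, the 0.90 accuracy threshold, and the `train_and_validate` callback are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ModelVersion:
    version: int
    validation_accuracy: float


def continuous_training_step(
    current: ModelVersion,
    live_accuracy: float,
    new_data_available: bool,
    train_and_validate: Callable[[], float],
    min_accuracy: float = 0.90,
) -> ModelVersion:
    """Retrain only when live performance degrades and fresh data exists."""
    # Trigger: skip retraining while the model is healthy or no new data arrived.
    if live_accuracy >= min_accuracy or not new_data_available:
        return current
    # Hypothetical callback: retrains on the latest data and returns
    # the candidate's accuracy on a held-out validation set.
    candidate_accuracy = train_and_validate()
    # Validation gate: keep the current model unless the candidate is better.
    if candidate_accuracy <= live_accuracy:
        return current
    return ModelVersion(current.version + 1, candidate_accuracy)


v1 = ModelVersion(version=1, validation_accuracy=0.95)
v2 = continuous_training_step(
    v1, live_accuracy=0.80, new_data_available=True,
    train_and_validate=lambda: 0.94,
)
print(v2)
```

In a Level 0 setup a person would make both decisions by hand. At Level 2, the code of this pipeline would itself be built, tested, and deployed through automated CI/CD.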

Qualcomm depiction of parallel training of shadow datasets.

As the following diagram shows, an organization can take a number of datasets, train on the shadow datasets in parallel, and build a candidate machine learning model for production deployment, in stark contrast to the IT DevOps lifecycle.

Qualcomm Neural processing engine MLOps workflow.