If you are curious about what measures multicollinearity, let me tell you that it is certainly not the correlation matrix. This article covers some very important concepts related to the multicollinearity assumption in linear regression, including the real reason why multicollinearity is a problem. I will focus on answering questions like:

- What do we mean by multicollinearity among the predictors?

- How do we measure multicollinearity?

- What is VIF and what does it measure? and

- Why is the presence of multicollinear predictors a problem in linear regression analysis?

This article assumes that the readers have some knowledge of linear regression and are familiar with the concepts of correlation, Pearson’s correlation coefficient, linear regression coefficient estimation, linear regression coefficient interpretation, standard errors of the regression coefficients, t-test in linear regression and the fundamental concepts of statistical testing of hypothesis. This article also assumes that the readers are familiar with the term VIF (variance inflation factor) and have probably used it (at least once) to detect multicollinear predictors, but no thorough understanding of VIF and multicollinearity is assumed.

MEASURES OF MULTICOLLINEARITY

If a predictor is highly collinear with several other predictors, then it is probably not adding much information in predicting the target and we may call such a predictor a redundant predictor. This sets the motivation to study how much a predictor is related to the other predictors. In linear regression, multicollinearity refers to the extent to which the predictors are linearly related to the other predictors.

These predictors are also called independent variables, a name that suggests they should not be correlated with one another. But that is just the basics. The presence of multicollinear predictors may cause several problems. Under perfect multicollinearity, where one predictor is an exact linear combination of the others, the least-squares problem has no unique solution (an iterative optimizer such as gradient descent will still return an answer, but it is only one of infinitely many). Interpretations such as 'with a one-unit increase in X, Y increases by b units' also become unreliable, because it is hard to change X while holding fixed the predictors it is collinear with. However, in this article, I will focus on one very specific problem in linear regression analysis that is caused by multicollinearity, and on how a measure like VIF arises to identify multicollinear predictors. But before we do that, let us first discuss the approaches that may be used to detect multicollinearity among the predictors.

- Multiple correlation

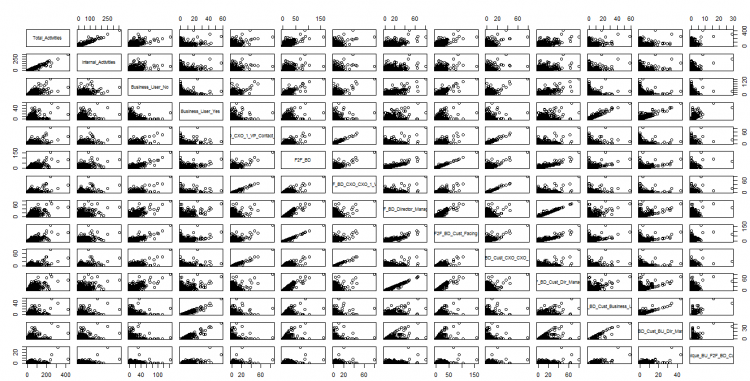

If we are trying to figure out the extent to which a predictor is associated with the other predictors, then looking at the correlation matrix does not help much. The correlation matrix helps us understand the pairwise associations among the predictors, but it does not tell us the extent to which one predictor is linearly related to all the other predictors taken together.

Probably the most logical approach to analysing the multicollinearity of a predictor is to calculate the multiple correlation coefficient between the predictor of interest and the rest of the predictors. Multiple correlation helps us study the linear dependence of one variable on a set of other variables. For example, we may like to study the dependence of the variable X1 on the predictors X2, X3 and X4 taken together. This can be done by calculating the simple correlation between X1 and its fitted values from the multiple regression of X1 on X2, X3 and X4, i.e.

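$$R_{1.234} = \operatorname{cor}(X_1,\; d_0 + d_2 X_2 + d_3 X_3 + d_4 X_4)$$

or, equivalently,

$$R_{1.234} = \operatorname{cor}(X_1, \hat{X}_1)$$

where $\hat{X}_1$ denotes the fitted values from regressing X1 on X2, X3 and X4, and $R_{1.234}$ is called the multiple correlation coefficient of X1 on X2, X3 and X4. This can be seen as a measure of the joint influence of X2, X3 and X4 on X1.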

Multiple correlation in R

For the purpose of demonstration, we use the ‘mtcars’ dataset in R.

#To find the multiple correlation between weight and the other predictors

data(mtcars)

#Fitted values of weight by regressing weight on other predictors

#Note that the target variable is mpg

wt.fitted = lm(wt ~ . - mpg, data=mtcars)$fitted.values

#multiple correlation

cor(mtcars$wt, wt.fitted)

## [1] 0.9664669
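To see why the pairwise correlation matrix alone can be misleading, here is a small sketch on simulated data (it is not part of the mtcars analysis). The variables x1 to x4, the sample size and the noise level are all assumptions chosen for illustration: x4 is built as the sum of three independent predictors plus a little noise, so each pairwise correlation with x4 is only moderate while the multiple correlation is close to 1.

```r
set.seed(42)

# Simulated predictors (illustrative assumption, not real data)
n  <- 200
x1 <- rnorm(n)
x2 <- rnorm(n)
x3 <- rnorm(n)
x4 <- x1 + x2 + x3 + rnorm(n, sd = 0.1)  # almost an exact linear combination
X  <- data.frame(x1, x2, x3, x4)

# Pairwise correlations: no single pair looks alarming
round(cor(X), 2)

# Multiple correlation of x4 with x1, x2 and x3 taken together
multiple_cor <- cor(x4, fitted(lm(x4 ~ x1 + x2 + x3, data = X)))
multiple_cor
```

In a setup like this, screening predictors by looking for large entries in the correlation matrix would likely miss x4, even though it is almost fully determined by the other three.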

- R-squared of individual predictors

Consider a linear regression problem with four predictors X1, X2, X3 and X4. Let us use Y to denote the target variable. For each predictor Xi, let us fit a linear regression model to regress Xi on the remaining predictors. This will give us four regression models:

Model 1: Regress X1 on X2, X3 and X4

Model 2: Regress X2 on X1, X3 and X4

Model 3: Regress X3 on X1, X2 and X4

Model 4: Regress X4 on X1, X2 and X3

Let $R_i^2$ denote the R-squared value of the ith model. It is interpreted as the proportion of the variance of Xi that is explained by the other predictors in the ith model. For example, if $R_1^2$ = 0.90, then 90% of the variance of X1 is explained by X2, X3 and X4 in model 1. A value this high indicates that the predictor is largely redundant, because most of its variance is explained by the other predictors. In other words, in the presence of the other predictors, it provides little additional information to explain Y. It may therefore be advisable to consider removing such redundant predictors from the model.
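As a rough sketch of these four auxiliary regressions, the snippet below uses mtcars with wt, hp, cyl and disp standing in for X1 to X4. That choice of predictors is an assumption made for illustration; any set of numeric predictors would work the same way.

```r
data(mtcars)
predictors <- c("wt", "hp", "cyl", "disp")  # illustrative stand-ins for X1..X4

# For each predictor, regress it on the remaining predictors and keep the R-squared
r_squared <- sapply(predictors, function(p) {
  others <- setdiff(predictors, p)
  fit <- lm(reformulate(others, response = p), data = mtcars)
  summary(fit)$r.squared
})

round(r_squared, 4)
```

Each entry is the share of that predictor's variance explained by the other three, so values near 1 flag the predictors that are close to redundant.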

Mathematically, $R_i^2$ can be shown to be the square of the multiple correlation between the predictor Xi and the rest of the predictors, i.e.,

$$R_i^2 = R_{i.12\ldots(i-1)(i+1)\ldots k}^2$$

where k is the total number of predictors and $R_{i.12\ldots(i-1)(i+1)\ldots k}$ is the multiple correlation coefficient of Xi on the remaining (k-1) predictors. This is a very interesting relationship, and it is the reason why R-squared is also known as multiple R-squared. Since the multiple correlation is the correlation between a variable and its own least-squares fitted values, it is never negative and ranges between 0 and 1, and so does R-squared. If a predictor is highly collinear with the other predictors, then the multiple correlation between the predictor and the rest of the predictors will be close to 1 and the value of $R_i^2$ will be close to 1 as well.

#Quick Check: R^2 = (multiple correlation)^2

model = lm(mpg ~ ., mtcars)

summary(model)$r.squared

## [1] 0.8690158

#Multiple correlation between MPG and the predictors

cor(mtcars$mpg, model$fitted.values)

## [1] 0.9322102

#Square of the multiple correlation

cor(mtcars$mpg, model$fitted.values)^2

## [1] 0.8690158

#which is equal to the R-square

- VIF (Variance Inflation Factor)

The VIF or the Variance Inflation Factor for the predictor Xi is calculated using the following formula:

$$\mathrm{VIF}_i = \frac{1}{1 - R_i^2}$$

From the above formula it is not difficult to see that VIFi increases with $R_i^2$ (the relationship is increasing but not proportional) and ranges from 1, when $R_i^2$ = 0, to infinity, as $R_i^2$ approaches 1. That means the higher the value of $R_i^2$, the higher the value of VIFi. So a high value of VIFi indicates that the ith predictor is highly multicollinear with the other predictors.
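A few lines of arithmetic make the shape of this relationship concrete. The R-squared values below are arbitrary illustrative inputs, not results from any dataset.

```r
# Arbitrary R-squared values chosen for illustration
r2  <- c(0, 0.5, 0.8, 0.9, 0.95, 0.99)
vif <- 1 / (1 - r2)

data.frame(R2 = r2, VIF = vif)
```

The VIF stays modest for small and moderate values of R-squared and then grows very quickly as R-squared approaches 1.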

Note that, though VIF helps in detecting multicollinearity, it is not a measure of multicollinearity. In the next section, we will discuss some details about the formula of VIF and talk about why this formula is called variance inflation factor.

VARIANCE INFLATION FACTOR (VIF)

The coefficients of linear regression are estimated by minimizing the residual sum of squares (RSS). The RSS depends on the collected sample. Therefore, as the sample changes, the estimated values of the coefficients change as well. This dispersion of the linear regression coefficients over different samples is captured by the standard errors of the regression coefficients. The squared standard error (variance) of a coefficient is given by the following formula.

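$$SE^2(b_i) = \frac{\sigma^2}{(1 - R_i^2)\sum_{j=1}^{n}(x_{ij} - \bar{x}_i)^2} \qquad \ldots (1)$$

Here $x_{ij}$ is the value of Xi for the jth observation and $\bar{x}_i$ is its sample mean, $R_i^2$ is the value of R-squared obtained by regressing Xi on the other predictors, and $\sigma^2$ is the population error variance, a population parameter that is unknown to us. An estimate of $\sigma^2$ can be obtained using the following formula.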

$$\hat{\sigma}^2 = \frac{(1 - R_y^2)\sum_{j=1}^{n}(y_j - \bar{y})^2}{n - p - 1} \qquad \ldots (2)$$

where $R_y^2$ is the value of R-squared obtained by regressing Y on the predictors, $y_j$ is the value of the target variable Y for the jth observation, $\bar{y}$ is the sample mean of Y, n is the sample size and p is the number of predictors in the model. (The mathematical derivation of this formula is purposefully omitted to simplify the discussion.)

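Substituting the estimate $\hat{\sigma}^2$ from equation (2) in place of $\sigma^2$ in equation (1), we get the following equation:

$$SE^2(b_i) = \frac{(1 - R_y^2)\sum_{j=1}^{n}(y_j - \bar{y})^2 \,/\, (n - p - 1)}{(1 - R_i^2)\sum_{j=1}^{n}(x_{ij} - \bar{x}_i)^2} \qquad \ldots (3)$$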

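Rearranging the factors in the above equation gives us

$$SE^2(b_i) = \frac{(1 - R_y^2)\sum_{j=1}^{n}(y_j - \bar{y})^2}{(n - p - 1)\sum_{j=1}^{n}(x_{ij} - \bar{x}_i)^2} \times \frac{1}{1 - R_i^2} \qquad \ldots (4)$$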

If Xi is highly correlated with the other predictors, then $R_i^2$ will be high and, as a result, the factor $1/(1 - R_i^2)$ will be high as well. This factor inflates the variance of the estimate bi, which is why it is called the variance inflation factor (VIF).

Let us do a quick check in software to see how this formula gives the estimated standard error of a linear regression coefficient.

Quick check using R

#Let

#Target: mpg

#Predictors: wt, hp, cyl, disp

#Fit a linear regression model to regress mpg on the predictors and obtain R^2 of the model

model = lm(mpg ~ wt + hp + cyl + disp, data=mtcars)

summary(model)$r.squared

## [1] 0.8486348

#R^2 of the model to regress weight on other predictors

summary(lm(wt ~ hp + cyl + disp, data=mtcars))$r.squared

## [1] 0.79373

#Calculation of the estimated variance of the regression coefficient of the variable wt (using formula)

n=32

p=4

y = mtcars$mpg

x = mtcars$wt

numerator = (1-0.8486348)*sum((y-mean(y))^2)

denominator = (n-p-1)*sum((x-mean(x))^2)

VIF = 1/(1-0.79373)

variance_coef_wt = numerator/denominator*VIF

#The estimated standard error of the regression coefficient of the variable wt

sqrt(variance_coef_wt)

## [1] 1.015474

#This value matches with the value given in the linear regression summary table (See the value of Std. Error corresponding to the predictor wt)

summary(model)

##

## Call:

## lm(formula = mpg ~ wt + hp + cyl + disp, data = mtcars)

##

## Residuals:

## Min 1Q Median 3Q Max

## -4.0562 -1.4636 -0.4281 1.2854 5.8269

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 40.82854 2.75747 14.807 1.76e-14 ***

## wt -3.85390 1.01547 -3.795 0.000759 ***

## hp -0.02054 0.01215 -1.691 0.102379

## cyl -1.29332 0.65588 -1.972 0.058947 .

## disp 0.01160 0.01173 0.989 0.331386

## ---

## Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ‘ 1

##

## Residual standard error: 2.513 on 27 degrees of freedom

## Multiple R-squared: 0.8486, Adjusted R-squared: 0.8262

## F-statistic: 37.84 on 4 and 27 DF, p-value: 1.061e-10
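The check above hard-codes the two R-squared values and covers only wt. A minimal sketch that applies the same formula to every predictor in the model, computing each R-squared on the fly, could look like this. The helper names (se_by_formula, se_from_lm) are mine and purely illustrative.

```r
data(mtcars)
predictors <- c("wt", "hp", "cyl", "disp")
model <- lm(reformulate(predictors, response = "mpg"), data = mtcars)

n    <- nrow(mtcars)
p    <- length(predictors)
y    <- mtcars$mpg
r2_y <- summary(model)$r.squared  # R-squared of mpg on all predictors

# Standard error of each coefficient from the rearranged variance formula
se_by_formula <- sapply(predictors, function(v) {
  x    <- mtcars[[v]]
  aux  <- lm(reformulate(setdiff(predictors, v), response = v), data = mtcars)
  vif  <- 1 / (1 - summary(aux)$r.squared)
  sqrt((1 - r2_y) * sum((y - mean(y))^2) /
         ((n - p - 1) * sum((x - mean(x))^2)) * vif)
})

# Standard errors reported by lm() for comparison
se_from_lm <- coef(summary(model))[predictors, "Std. Error"]
cbind(se_by_formula, se_from_lm)
```

The two columns should agree up to floating-point error, which suggests the formula holds for all the coefficients and not just for wt.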

VIF calculation using R. There is a simple function in R that calculates VIFs easily: vif(), available in the package car.

#Calculation of VIF (using the vif() function in library ‘car’)

library(car)

vif(lm(mpg~., data=mtcars))

## cyl disp hp drat wt qsec vs

## 15.373833 21.620241 9.832037 3.374620 15.164887 7.527958 4.965873

## am gear carb

## 4.648487 5.357452 7.908747
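The same numbers can be reproduced in base R, without the car package, by running the auxiliary regressions directly. This is only a sketch of the definition, not a description of how vif() is implemented internally.

```r
data(mtcars)
predictors <- setdiff(names(mtcars), "mpg")  # all ten predictors of mpg

# VIF of each predictor: 1 / (1 - R-squared of that predictor on the others)
vif_by_hand <- sapply(predictors, function(v) {
  aux <- lm(reformulate(setdiff(predictors, v), response = v), data = mtcars)
  1 / (1 - summary(aux)$r.squared)
})

round(vif_by_hand, 3)
```

The result should match the vif() output shown above, which is a useful sanity check that VIF is nothing more than a transformation of the R-squared of each predictor on the others.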

Interpretation of VIF. This is the factor by which the variance of the coefficient of the ith predictor is inflated compared to what it would be if $R_i^2$ = 0, i.e. if the ith predictor were uncorrelated with the other predictors. For example, the VIF corresponding to the variable weight is 15.165. This means that the variance of the estimated coefficient of weight is about 15.165 times what it would be if weight were uncorrelated with the other predictors; equivalently, its standard error is inflated by a factor of about 3.9, the square root of the VIF.

PROBLEMS DUE TO MULTICOLLINEARITY

In linear regression analysis we perform a t-test to test whether a predictor is linearly related to the target, given the other predictors in the model. The null and alternate hypotheses of this statistical test are:

H0: The predictor is not linearly related to the target

H1: The predictor is linearly related to the target

A small p value (below the chosen level of significance) leads to a rejection of the null hypothesis.

In order to understand where things may go wrong in the presence of multicollinear predictors let us understand one more concept related to statistical inference called the power of a statistical test.

Power of a statistical test. For most hypothesis-testing problems, the type 1 error is considered the more serious error. That is, a false rejection of the null hypothesis is considered more serious than a false rejection of the alternate hypothesis (which is the type 2 error). Therefore, before starting any hypothesis test, we pre-set the value of α (the probability of a type 1 error) to a very small value. You may be very cautious about committing a type 1 error, but believe me, you are probably more interested in not making a type 2 error.

Let us understand this with a fictitious example: a pharmaceutical company has worked very hard over the past few years to come up with a new drug that it claims cures a headache within 5 minutes on average. To support this claim with data, a hypothesis-testing framework needs to be designed and the claim needs to be tested experimentally. Let μ denote the average time (in minutes) taken by the medicine to cure a headache. Then the null and the alternate hypotheses for this problem can be written as,

H0: μ ≥5

H1: μ <5

Now, we need to test these hypotheses experimentally. To keep things simple, let us say n subjects who suffer from headaches are chosen at random. These patients are kept under observation and are prescribed the new medicine whenever they suffer from a headache. The time (in minutes) taken to cure the headache is recorded for each patient. A very small average of these durations will lead us to doubt the null hypothesis and will give us a very small p value. (Please note that this example is simplified; experimental design involves a lot more than just picking a random sample of patients.)

A large p value, i.e. one greater than the level of significance, means we fail to reject H0, which in the language of this article amounts to rejecting H1. However, the rejection of H1 does not necessarily mean that the claim is false; it is only the best decision we can take based on the sample. If H1 is rejected falsely, then we commit a type 2 error. Committing a type 2 error would mean that the medicine was truly effective but its effect could not be captured from the collected sample. As an experimental researcher, you would probably like your statistical test to be designed in such a way that it is able to capture the effect. The ability of a statistical test to capture an effect that is present is called the power of the test. In other words, the probability that a statistical test correctly accepts the alternate hypothesis (or, correctly rejects the null hypothesis) is called the power of the test. Therefore, mathematically, the power of a test can be written as,

Power=P(accepting the true alternate hypothesis)

i.e., Power=1-P(rejecting the true alternate hypothesis)

i.e., Power=1-P(type 2 error)

Therefore, the lower the probability of a type 2 error, the higher the power of the statistical test.
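For the headache example, power can be computed with the base R function power.t.test(). The numbers below are hypothetical design values that I am assuming purely for illustration (a true mean of 4.5 minutes, i.e. a shortfall of 0.5 from the claimed 5 minutes, a standard deviation of 1.5 minutes and α = 0.05); they do not come from any real trial.

```r
# Power of a one-sample, one-sided t-test for a hypothetical headache study
# Assumed values: true shortfall from 5 minutes = 0.5, sd = 1.5, alpha = 0.05
power_at_n <- function(n) {
  power.t.test(n = n, delta = 0.5, sd = 1.5, sig.level = 0.05,
               type = "one.sample", alternative = "one.sided")$power
}

power_30 <- power_at_n(30)  # power with 30 patients
power_60 <- power_at_n(60)  # power with 60 patients
c(n_30 = power_30, n_60 = power_60)
```

Under these assumptions the larger sample gives the higher power: with more patients the standard error of the sample mean shrinks, so the same true effect is easier to detect.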

Multicollinearity inflates the variance of the linear regression coefficients. Is that a problem?

Now, let’s come back to our discussion on how the presence of multicollinear predictors affects the linear regression analysis. For that, let us consider the ith regression coefficient bi. If the predictor Xi is multicollinear with the other predictors then this will inflate the standard error (square root of variance) of bi. As a result, the t-value, which is given by the formula,

$$t = \frac{b_i}{SE(b_i)}$$

decreases in magnitude. This will increase the p value of the test. In this case, we may fail to reject a false null hypothesis, i.e. fail to accept a true alternate hypothesis. This increases the probability of committing a type 2 error, and hence the power of the statistical test decreases.

So, in short, multicollinearity reduces the power of the t-test in linear regression. This may in turn prevent us from identifying the effect of the individual predictors on the target.
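A small simulation can illustrate this loss of power. Everything in the sketch below is an assumption chosen for illustration: two predictors, a true coefficient of 0.3 on each, a sample size of 50, 500 replications and α = 0.05. The only thing that changes between the two runs is the correlation between x1 and x2.

```r
set.seed(1)

# Estimate the power of the t-test on x1 when x1 and x2 have correlation rho.
# All settings here are illustrative assumptions, not values from the article.
power_for_rho <- function(rho, n = 50, beta = 0.3, reps = 500, alpha = 0.05) {
  rejections <- replicate(reps, {
    x1 <- rnorm(n)
    x2 <- rho * x1 + sqrt(1 - rho^2) * rnorm(n)  # population cor(x1, x2) = rho
    y  <- 1 + beta * x1 + beta * x2 + rnorm(n)   # x1 truly affects y
    p_value <- coef(summary(lm(y ~ x1 + x2)))["x1", "Pr(>|t|)"]
    p_value < alpha                               # TRUE if H0 is rejected
  })
  mean(rejections)  # share of samples in which the true effect was detected
}

power_low  <- power_for_rho(0)     # uncorrelated predictors
power_high <- power_for_rho(0.95)  # highly collinear predictors
c(rho_0 = power_low, rho_0.95 = power_high)
```

The true effect of x1 is identical in both settings, yet the test detects it much less often when x1 and x2 are highly correlated, because the inflated standard error pulls the t-value towards zero.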