The top 20 search terms used by those in the United States seeking white supremacist material online last year started with “RaHoWa,” short for Racial Holy War and the name of a white power band. Then came “Ku Klux Klan phone number.” Phrases like “how to kill blacks” or “swastika tattoo” fill most of the list.

Amid an upsurge in violent hate attacks, federal law enforcement agencies and other groups have been scrutinizing online activity like internet searches to counteract radicalization.

Now a private start-up company has developed an unusual solution based on ordinary online marketing tools. It sends those who plug extremist search terms into Google to specially designed videos that promote anti-extremist views.

Known as the Redirect Method, it was first used against potential recruits for the Islamic State, but recently it has been repurposed against white supremacy in the United States.

The London-based start-up, Moonshot CVE, has worked with the Anti-Defamation League and Gen Next Foundation, a philanthropic organization, to develop a pilot program tailored for the United States. It ran for several months last summer, and senior counterterrorism officials have endorsed the method.

“I think in general that U.S. government work in the prevention space has been a little bit slow in coming, but this strikes us as a very worthwhile program that should continue,” said Russell E. Travers, acting director of the National Counterterrorism Center. “Anything you can do to stop individuals from consuming the kind of very gruesome radicalization potential that you see on the internet and take them someplace else — just common sense tells me that is a good thing to do.”

Moonshot was created by Vidhya Ramalingam, an American, and Ross Frenett, who first studied extremism in his native Ireland. Both worked at a London think tank that focused on Islamic extremism issues before they founded Moonshot CVE in 2015. (CVE stands for Countering Violent Extremism.)

At that time, most online efforts were geared toward expunging content. Such efforts might interrupt the activity, but did not address the underlying problem as Moonshot seeks to do. “Rather than police content, it would try to disrupt the process of radicalization,” said Clark Hogan-Taylor, the head of communications for Moonshot.

The effort to be unveiled in the coming months in the United States will respond to a wide range of search terms. Moonshot, which previously developed 48 ads, now has 1,064, and its five playlists have expanded to 86.

Moonshot buys ads on Google like any other company, and pays for clicks. Sometimes it will self-finance a run, as it did in New Zealand and Australia for 24 hours after attacks on mosques in March, when it knew extremist searches would spike. Other times it gets funding from governments and private companies.

To understand the approach, it is useful to consider another top 20 search term, “The Turner Diaries,” a dystopian 1978 novel about white supremacists seizing control of the United States.

A search for the “Diaries” could trigger a Google advertisement at the top of the page that says: “Proud of your heritage? | What you are not being told. Find out more information by watching our playlist.”

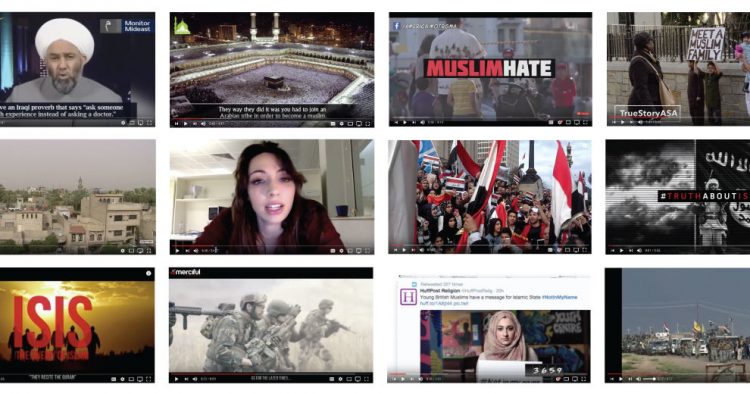

Clicking on the ad would pull up a YouTube playlist of about five to eight short videos in which various people, including former extremists, explain why the ideology is misguided.

The playlist could include a clip from the movie “American History X,” whose white supremacist central character undergoes a transformation after befriending a black man in prison. Former white supremacists have credited the movie with subverting their worldview.

The idea is not to berate the adherents of extremist ideology, but to help them change their minds themselves, said Ludovica di Giorgi, who manages the Redirect Method program.

The company has had trouble raising significant public or private money in the United States to deploy the method there, Ms. Di Giorgi said. Aspects of the method have raised civil liberties concerns about a program watching over people’s shoulders. But Moonshot vows that it gets data only on search terms and nothing about individuals.

In Canada, the government awarded Moonshot more than $1.5 million to run the program for 18 months, ending next March. Public Safety Canada, the ministry that deals with terrorism and crime, decided the method was “an innovative attempt” to address extremism online, Tim Warmington, the ministry spokesman, wrote in an email.

The method’s efficacy is difficult to assess, not least because its creators cannot exactly gather a focus group of white supremacists to ask how it affected their thinking.

“They are applying what commercial marketers do every day, putting Google Ads in front of people,” said Todd C. Helmus, the co-author of a 2018 RAND Corporation paper about measuring the effectiveness of such methods. “The innovation is applying that toward extremism.”

There are some indications the ads are at least being noticed. Someone recently posted comments on a Telegram channel calling the ads proof that Google and YouTube are actively trying to subvert and de-radicalize people. “Boycott the enemy and starve them of your data,” the post said.

The rise in white extremist violence has shifted the nature of threat assessments over the past five years, with special attention focused on the psychological makeup of potential recruits, said John D. Cohen, a former homeland security counterterrorism coordinator.

The Moonshot team spent months amassing a database of search terms, then uploaded a list of some 20,000 that will trigger an ad on Google.

Some are proper names like “Mussolini,” while others are names of white supremacist leaders in particular states. Many search terms were drawn from Nazi Germany, or refer to domestic white supremacist groups like “KKK membership.”

The company also evaluates whether a search shows sympathy for violence: Typing in “Hitler” would not be enough to trigger an ad, but “Hitler Hero” would.
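For readers who want to see how such a rule might look in practice, here is a minimal sketch in Python. It is an illustration only, not Moonshot's code or Google's ad-matching logic: the two-entry list and the function names `normalize` and `triggers_ad` are invented for this example, and it assumes an ad is triggered only when a listed phrase appears in the search as a run of whole words.

```python
import re

# Hypothetical trigger list for illustration; the article says the real
# database holds some 20,000 terms.
TRIGGER_PHRASES = {"hitler hero", "kkk membership"}


def normalize(query: str) -> list[str]:
    """Lowercase the query and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", query.lower())


def triggers_ad(query: str) -> bool:
    """Return True if any trigger phrase appears as a run of whole words."""
    words = normalize(query)
    for phrase in TRIGGER_PHRASES:
        target = phrase.split()
        n = len(target)
        # Slide a window of n words across the query and compare each
        # window to the phrase; an empty range means the query is too short.
        if any(words[i:i + n] == target for i in range(len(words) - n + 1)):
            return True
    return False
```

Under these assumptions, `triggers_ad("Hitler Hero")` is true while `triggers_ad("Hitler")` is false, because the bare name matches no listed phrase on its own.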

Searches about committing hate crimes surge after attacks like the August shooting at an El Paso Walmart or the 2018 shooting at the Tree of Life synagogue in Pittsburgh. Some of them are as straightforward as they are chilling: “I want to kill blacks” or “I want to kill Jews,” for instance.

Besides countering the pillars of white supremacist ideology, the company also put significant effort into building playlists that challenge the radicalization process.

Take music, for example.

One white supremacist band is called Blink 1488. Its name, similar to that of a popular rock band, is code, with 14 being the number of words in an infamous slogan and 88 meaning “Heil Hitler” since H is the eighth letter of the alphabet.

The band has issued a song called “What’s My Race Again?” with lyrics like “Diversity is just white genocide.”

But a search on Google for the song or the band may lead to this ad: “Are you a fan of Blink 1488? Are you looking for suggestions? Find new music to love and discover new top artists by watching our play list.”

Clicking on an ad like that pulls up playlists of similar genres of music — even mainstream bands — but without hateful lyrics.

Lyrics might seem innocuous but they can help socialize people toward extremism, Ms. Di Giorgi said. “If I can prevent you from listening to a song that talks about killing minorities and instead get you to listen to a random song, I think that is a win,” she said.